Although pretty much every podcast app relies on RSS, it isn't as publicly popular as it used to be. Still, it remains the absolute best way to combine stuff from loads of different places into one central app, where you can read it without having to click around a bunch of sites or scroll through your social feeds. You just open your RSS app and get reading, with every article and blog post presented in reverse chronological order.

While it's still traditional to bemoan the death of Google Reader all the way back in 2013 in any article about RSS, I'll skip the eulogy. The world of RSS apps has moved on and, a decade later, is actually in a much better place than it likely would have been if Google had remained at the top. My picks for the best RSS readers are far nicer than Reader ever was.

All of our best apps roundups are written by humans who've spent much of their careers using, testing, and writing about software.
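To make the "one central app, reverse chronological order" idea concrete, here is a minimal Python sketch of how a reader might merge several feeds into a single list. It uses only the standard library. The sample feeds, their titles and links, and the function names `parse_feed` and `merge_feeds` are hypothetical and exist only for illustration. A real RSS app also has to fetch feeds over the network and cope with Atom, missing dates, and malformed XML, none of which this sketch attempts.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Two made-up RSS 2.0 feeds standing in for real sites (assumption).
FEED_A = """<rss version="2.0"><channel>
  <title>Example Blog A</title>
  <item><title>Older post</title><link>https://example.com/a/1</link>
    <pubDate>Mon, 01 Jan 2024 09:00:00 GMT</pubDate></item>
  <item><title>Newest post</title><link>https://example.com/a/2</link>
    <pubDate>Wed, 03 Jan 2024 09:00:00 GMT</pubDate></item>
</channel></rss>"""

FEED_B = """<rss version="2.0"><channel>
  <title>Example Blog B</title>
  <item><title>Middle post</title><link>https://example.com/b/1</link>
    <pubDate>Tue, 02 Jan 2024 09:00:00 GMT</pubDate></item>
</channel></rss>"""


def parse_feed(xml_text):
    """Return one dict per <item> in an RSS 2.0 document."""
    channel = ET.fromstring(xml_text).find("channel")
    source = channel.findtext("title", default="")
    entries = []
    for item in channel.findall("item"):
        entries.append({
            "source": source,
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
            # RSS dates use the RFC 822 style that email.utils understands.
            "published": parsedate_to_datetime(item.findtext("pubDate")),
        })
    return entries


def merge_feeds(feeds):
    """Combine entries from every feed, newest first."""
    entries = [entry for xml_text in feeds for entry in parse_feed(xml_text)]
    return sorted(entries, key=lambda entry: entry["published"], reverse=True)


if __name__ == "__main__":
    for entry in merge_feeds([FEED_A, FEED_B]):
        print(entry["published"].date(), entry["source"], "-", entry["title"])
```

Sorting on the parsed date rather than on feed order is what lets posts from different sites interleave correctly in one list.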

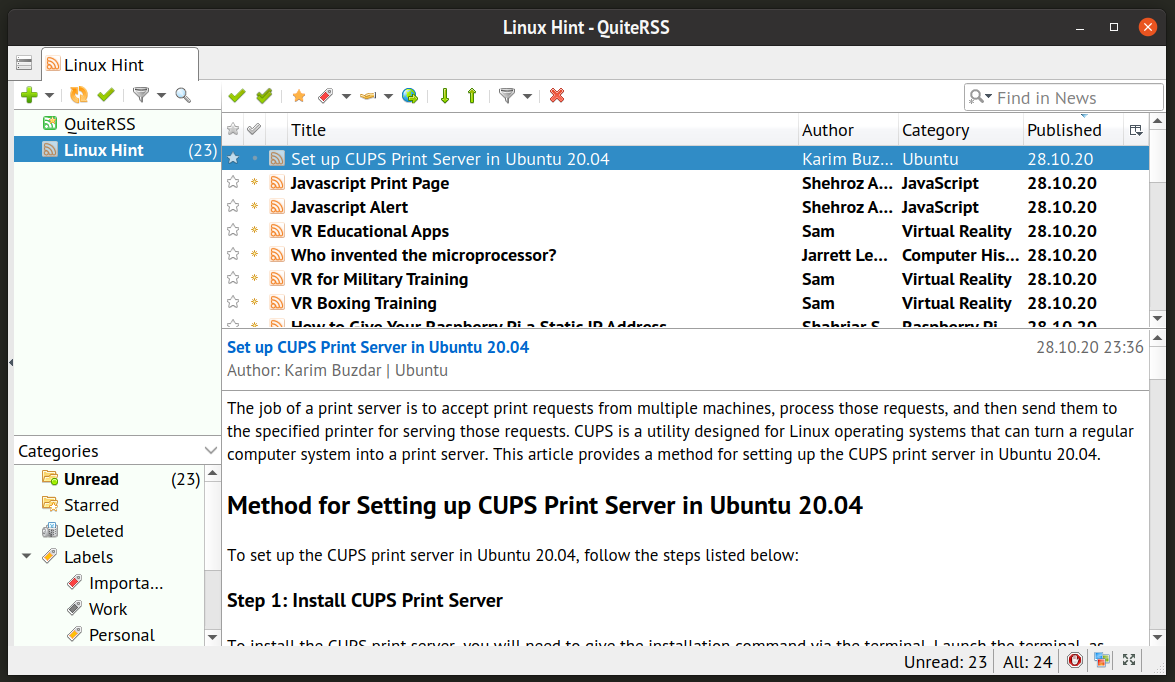

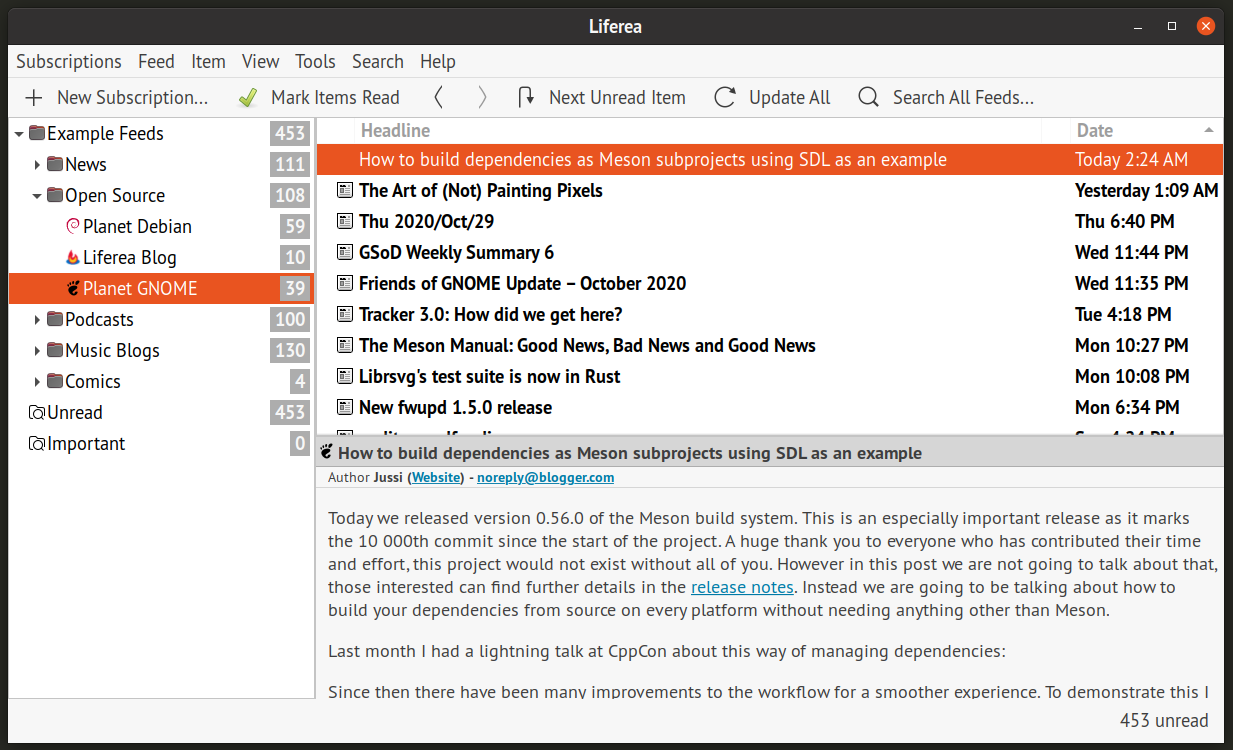

RSS (it stands for Really Simple Syndication) has been around since the '90s, and it lets sites publish a feed of all their content in a form that RSS apps can easily parse and aggregate.

LaMDA’s conversational skills have been years in the making. Like many recent language models, including BERT and GPT-3, it’s built on Transformer, a neural network architecture that Google Research invented and open-sourced in 2017. That architecture produces a model that can be trained to read many words (a sentence or paragraph, for example), pay attention to how those words relate to one another and then predict what words it thinks will come next.

But unlike most other language models, LaMDA was trained on dialogue. During its training, it picked up on several of the nuances that distinguish open-ended conversation from other forms of language. One of those nuances is sensibleness. Basically: Does the response to a given conversational context make sense? For instance, when someone makes a statement, you might expect another person to respond with something like:

“How exciting! My mom has a vintage Martin that she loves to play.”

That response makes sense, given the initial statement. But sensibleness isn’t the only thing that makes a good response. After all, the phrase “that’s nice” is a sensible response to nearly any statement, much in the way “I don’t know” is a sensible response to most questions. Satisfying responses also tend to be specific, by relating clearly to the context of the conversation. In the example above, the response is sensible and specific.

LaMDA builds on earlier Google research, published in 2020, that showed Transformer-based language models trained on dialogue could learn to talk about virtually anything. Since then, we’ve also found that, once trained, LaMDA can be fine-tuned to significantly improve the sensibleness and specificity of its responses.

These early results are encouraging, and we look forward to sharing more soon, but sensibleness and specificity aren’t the only qualities we’re looking for in models like LaMDA. We’re also exploring dimensions like “interestingness,” by assessing whether responses are insightful, unexpected or witty. Being Google, we also care a lot about factuality (that is, whether LaMDA sticks to facts, something language models often struggle with), and are investigating ways to ensure LaMDA’s responses aren’t just compelling but correct.

But the most important question we ask ourselves when it comes to our technologies is whether they adhere to our AI Principles. Language might be one of humanity’s greatest tools, but like all tools it can be misused. Models trained on language can propagate that misuse - for instance, by internalizing biases, mirroring hateful speech, or replicating misleading information. And even when the language it’s trained on is carefully vetted, the model itself can still be put to ill use.

Our highest priority, when creating technologies like LaMDA, is working to ensure we minimize such risks. We're deeply familiar with issues involved with machine learning models, such as unfair bias, as we’ve been researching and developing these technologies for many years. That’s why we build and open-source resources that researchers can use to analyze models and the data on which they’re trained; why we’ve scrutinized LaMDA at every step of its development; and why we’ll continue to do so as we work to incorporate conversational abilities into more of our products.
